AI writes the code now. Does your testing strategy need to change?

When humans coded, the "cost of writing tests" was the central constraint. Tests take time, so focus on the highest ROI layer — that was the conventional wisdom. Then AI started writing code. That cost structure flipped.

Here's how to rethink your testing strategy for the AI era, starting from the Testing Trophy.

In 2018, Kent C. Dodds introduced the "Testing Trophy." It started from a single line by Guillermo Rauch (creator of Socket.io and Next.js).

"Write tests. Not too many. Mostly integration."

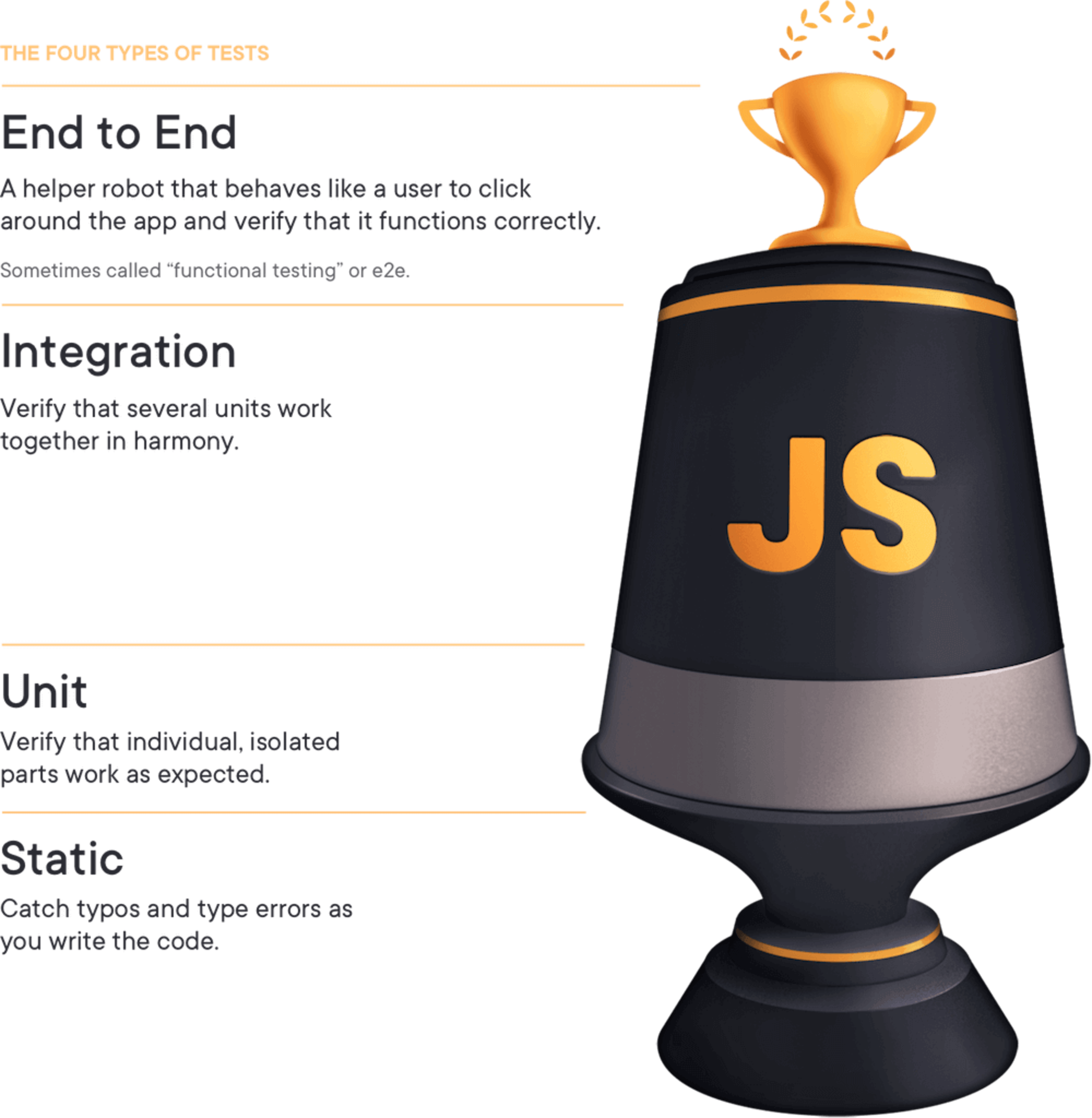

The Testing Trophy has 4 layers.

Bottom to top: Static, Unit, Integration, E2E. Trophy shape means the middle (Integration) is widest. Most of your tests should be there.

Before the Trophy, the "Testing Pyramid" was standard — proposed by Mike Cohn in 2009 and formalized by Martin Fowler. A pyramid with Unit tests as the wide base.

| Testing Pyramid (2009) | Testing Trophy (2018) | |

|---|---|---|

| Widest layer | Unit | Integration |

| Includes Static | No | Yes (base layer) |

| Core philosophy | Many fast, isolated tests | Tests that resemble actual usage |

Kent C. Dodds' core principle:

"The more your tests resemble the way your software is used, the more confidence they can give you."

Unit tests verify one isolated function. But users don't call functions directly. They click buttons, submit forms, check results. Integration tests are closer to that pattern.

The reason the Trophy emphasizes Integration: confidence relative to cost.

Higher up the stack (E2E → Integration → Unit → Static) means higher execution cost.

Test writing + execution cost

Conclusion: focus on Integration — best ROI.

Unit tests are particularly painful in frontend. UI changes too fast. Change button text and 5 unit tests break. The cost of maintaining the tests exceeds the value they provide.

One assumption underneath all of this: humans write the tests manually.

AI coding tools (Claude Code, Cursor, GitHub Copilot) made the "cost of writing tests" collapse.

Real numbers:

Human writing

1x

Baseline speed

$$

Engineer time = cost

Not too many

Constrained by cost

AI writing

9x

Generation speed

<$1

Cost to generate 44 E2E tests

No constraint

Cost → 0

Time it takes a human to write one unit test — AI generates the full test suite. Cost approaching zero makes "Not too many" meaningless as a constraint.

Strategy has to change.

When AI codes, unit and integration tests aren't a separate effort. They come out alongside the code. No need to consciously decide "I should write tests." Just tell AI "write tests too" and done.

Here's the interesting shift. When AI writes the code, mock-based E2E tests are actually more efficient.

Why mock? Real E2E is flaky because of external service dependencies. Mocking isolates external dependencies while still covering a wide range. And critically, you can replace mocks with real E2E later. Just remove the mocks. Mock E2E is a stepping stone to real E2E.

When humans coded, E2E was too expensive to write — Integration was the compromise. When AI codes, that cost barrier disappears. No reason to compromise anymore.

No matter how good mock E2E gets, there's one layer it absolutely cannot replace. The very bottom of the Trophy: Static tests.

Static tests are errors caught without running code. Three specific things:

Why can't E2E replace it? Fundamentally, the scope of what's checked is different.

E2E tests only verify execution paths. Only the code that the test scenario actually touches. Static analysis checks every file in the project, without running anything, all at once.

Example:

async function fetchUser(id: string) {

const response = await fetch(`/api/users/${id}`);

response.json(); // Promise not awaited

}This code can pass E2E tests. If the response is fast enough, the timing issue won't show up. But the no-floating-promises ESLint rule catches it immediately. It structurally detects that a floating Promise with no await is unhandleable.

TypeScript and ESLint are complementary.

| TypeScript | ESLint | |

|---|---|---|

| Catches | Type mismatches, non-existent properties | Logical anti-patterns, code quality issues |

| Example | Assigning string to number | Unhandled Promise, unsafe any usage |

| Scope | Errors within the type system | Type-safe but logically broken code |

Rules like no-floating-promises, no-unsafe-member-access, unbound-method catch code that passes type checking but blows up at runtime.

The key insight: the ROI of static testing actually increases in the AI era.

The faster AI generates code in bulk, the more important the safety net that structurally validates it. E2E checks "does it work?" Static checks "is it written correctly?" They're not substitutes — they're complements.

The progression so far:

Both ends (Static, E2E) get wider. The middle (Unit, Integration) gets narrower.

That shape — sound familiar?

An hourglass.

| Era | Shape | Widest layer | Assumption |

|---|---|---|---|

| 2009 manual coding | Pyramid ▲ | Unit | Human writes, focus on fast tests |

| 2018 modern FE | Trophy 🏆 | Integration | Human writes, focus on ROI |

| 2025 AI coding | Hourglass ⏳ | Static + E2E | AI writes, maximize both ends |

Unit and Integration don't disappear. They become the layer AI fills in naturally while coding. The human strategy focuses on the two ends.

Bottom (Static): Safety net that runs automatically once configured. Maintenance cost nearly zero.

Top (E2E): AI generates at low cost in bulk. Start with mocks, swap to real E2E later.

Middle (Unit/Integration): AI's byproduct. No separate strategy needed.

Static testing matters. But how do you actually set it up?

There's a common mistake. Asking AI like this:

"Recommend some ESLint rules."

AI gives generic, safe suggestions. No project context. Could recommend Next.js plugins for a React Native project, or a ruleset so strict it kills productivity.

Do this instead:

Generic question

"Recommend some ESLint rules."

Criteria-enriched question

"Recommend ESLint plugins for this React Native project."

Key: explicitly define the criteria.

AI reasons to match the criteria given. No criteria means AI defaults to its own baseline. Specific criteria means precise results. Same AI, same question — quality of output depends entirely on how well you defined the criteria.

Go one step further: multi-agent research.

One AI gives one perspective. Multiple agents investigating different angles and synthesizing reduces bias. "Agent A researches npm trends, Agent B analyzes GitHub issues, Agent C collects community reviews — compile a full report." That's the move.

One question difference can change the entire code quality foundation of your project.

Static testing is set up. Next question: when do you run it?

Humans coding in an IDE get red squiggles in real time. ESLint and TypeScript watching files in the background, flagging issues as they appear.

That works because humans use an IDE.

AI coding agents (Claude Code, etc.) bypass the IDE. They modify files directly in the terminal. No real-time editor linting.

Two options:

1. Catch in real time: Run lint every time a file is modified.

2. Catch in batch: Run all at once after coding is done.

Each has its best use case.

Real-time is better when: You don't know the scope of changes ahead of time. Change a props type and errors propagate to every file that imports it. Hunting them down one by one with lint after the fact is tedious.

Batch is better when: You already know the scope. Refactoring where you know which files change — run lint once at the end.

In AI coding, batch is more practical. Simple reason: when AI gets error feedback, it fixes things on its own. Even if cross-file errors propagate, just say "fix all lint errors" and AI handles it.

Claude Code has Hooks — commands that run automatically at specific points.

The Stop Hook fires right before Claude finishes a task and says "done." Hook lint and type check here, and they run all at once when coding finishes.

{

"hooks": {

"Stop": [

{

"matcher": "",

"hooks": [

{

"type": "command",

"command": "npx tsc --noEmit && npx eslint . --ext .ts,.tsx",

"timeout": 60

}

]

}

]

}

}What this config does is simple.

The Stop Hook does what the IDE's red squiggles used to do.

PostToolUse Hook lets you check after every file modification. But Stop Hook is sufficient for most cases. Running lint between every file modification during AI coding is inefficient. Run it all at once when done — if there are errors, AI fixes them all at once. Cleaner.

The full picture:

Testing Trophy (human era): Writing tests is expensive, so focus on Integration — best ROI.

Testing Hourglass (AI era): Writing tests costs nothing, so maximize both ends.

The new bottleneck isn't "writing cost" — it's "maintenance cost." Static testing has near-zero maintenance cost. Configure once, done. Unless you change the rules, it keeps running on its own.

Pyramid was the answer for the manual testing era.

Trophy was the answer for modern frontend.

Hourglass is the answer for AI coding.

When tools change, strategy has to change. Redraw your testing strategy as an hourglass.

If you want to explore these shifts together, sign up. I'll keep sharing what I find from actually using these tools.